PKR 180K/Year in Bot Waste: Google’s Fix for Pakistani Sites

Last updated: 2026-05-06 — by Hamza Ali, SEO Technical Lead at WeProms Digital.

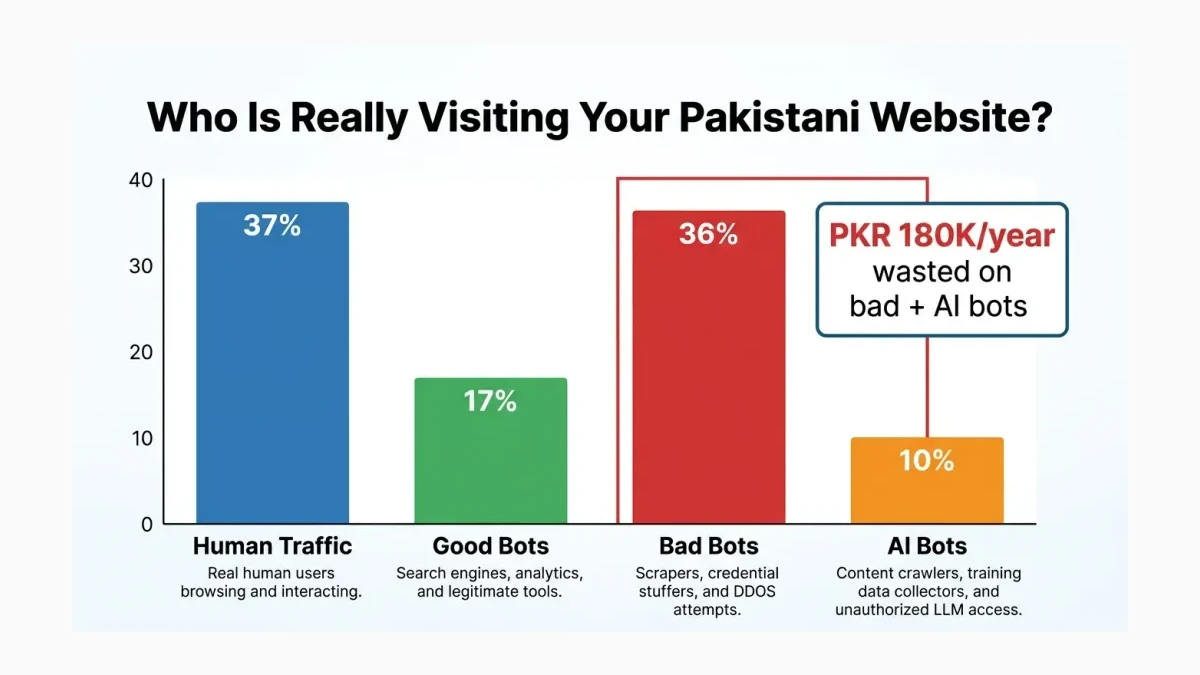

TL;DR: Google’s Web Bot Auth protocol uses cryptographic signatures to verify legitimate AI agents and crawlers, letting Pakistani websites separate real Googlebot traffic from scrapers that waste up to PKR 180,000 yearly in server costs. Bots now drive 63% of all web requests globally, with AI bot traffic surging 300% in 2026 alone. WeProms Digital, Pakistan’s leading SEO agency, helps Pakistani businesses prepare their infrastructure for this verification shift.

A Lahore ecommerce store paying PKR 40,000 monthly for hosting and CDN receives 63% of its server requests from bots, not customers. The non-Googlebot waste, including AI scrapers, credential stuffers, and content thieves, burns through roughly PKR 15,000 every month in server resources that generate zero revenue. Over twelve months, that silent drain reaches PKR 180,000. Most Pakistani business owners never notice it because the hosting bill stays the same whether a request comes from a real buyer in Karachi or a scraper in Eastern Europe.
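The arithmetic behind the headline figure can be checked in a few lines. This is a minimal sketch using only the numbers quoted above (PKR 40,000 monthly hosting, roughly PKR 15,000 monthly waste); the function name is illustrative.

```python
def annual_bot_waste(monthly_waste_pkr: int, months: int = 12) -> int:
    """Project a monthly bot-waste estimate over a number of months."""
    return monthly_waste_pkr * months


monthly_hosting_pkr = 40_000  # hosting + CDN bill from the example above
monthly_waste_pkr = 15_000    # estimated non-Googlebot waste per month

annual = annual_bot_waste(monthly_waste_pkr)
share_of_bill = monthly_waste_pkr / monthly_hosting_pkr

print(f"Annual waste: PKR {annual:,}")            # PKR 180,000
print(f"Share of hosting bill: {share_of_bill:.1%}")  # 37.5%
```

Substituting your own hosting bill and an estimate of wasted capacity gives a rough figure for your site.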

How much bot traffic hits Pakistani websites every month?

Bot traffic — automated requests from crawlers, scrapers, and AI agents rather than human visitors — now accounts for up to 63% of global internet traffic according to multiple 2026 infrastructure reports. For Pakistani websites hosted on shared servers or VPS plans, the majority of server resources serve machines, not customers.

AI-specific bot traffic has surged 300% in 2026, jumping from 2.6% to 10% of total web traffic in just eight months. The 2026 AI Bot Impact Report documents this acceleration: every new AI model launch, every new AI agent deployment, and every expanded AI search engine index adds crawlers hitting Pakistani websites around the clock. Pakistani ecommerce stores on platforms like Daraz and independent Shopify Pakistan sites face the worst of it. Product pages, pricing data, and inventory information attract scrapers constantly.

A Karachi fashion retailer on a PKR 50,000/month VPS found that 41% of its bandwidth went to non-Google crawlers providing zero business value. That retailer’s hosting control panel showed 127,000 bot requests in a single day against 73,000 human visits. The bots consumed bandwidth, triggered database queries, and slowed page load times for actual shoppers during peak evening hours.

The cost isn’t abstract. Every bot request consumes CPU cycles, bandwidth, and database queries that a real customer might need. When a Rawalpindi electronics store’s checkout page loads slowly during a sale event because AI scrapers are hammering the product catalog, the bot traffic directly reduces sales. It is like paying rent on a restaurant where 63% of the seats are occupied by people who never order: the landlord charges you for full occupancy regardless.

Check your server logs this week. Most Pakistani hosting control panels (cPanel, DirectAdmin) show bot traffic under “Visitors” or “Raw Access Logs.” Filter for user-agent strings containing “bot,” “crawl,” or “agent” to see the scale. The number will surprise you.
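If you can download the raw access log, the same filter can be scripted. The following is a minimal sketch, assuming the log uses the common Apache/Nginx combined format, where the user agent is the last double-quoted field on each line. The sample lines, IP addresses, and the `ExampleCrawler` name are made up for illustration.

```python
import re
from collections import Counter

# In the combined log format the user agent is the last quoted field.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')
BOT_HINTS = ("bot", "crawl", "agent")  # substrings suggested in the text


def user_agent(line: str) -> str:
    """Return the user-agent field of a log line, or '' if none is found."""
    match = UA_PATTERN.search(line)
    return match.group(1) if match else ""


def looks_like_bot(ua: str) -> bool:
    """Rough check: does the user agent contain any bot-like substring?"""
    ua = ua.lower()
    return any(hint in ua for hint in BOT_HINTS)


def summarize(lines):
    """Count total requests, bot-like requests, and requests per bot agent."""
    total = bots = 0
    by_agent = Counter()
    for line in lines:
        if not line.strip():
            continue
        total += 1
        ua = user_agent(line)
        if looks_like_bot(ua):
            bots += 1
            by_agent[ua] += 1
    return total, bots, by_agent


SAMPLE = [
    '66.249.66.1 - - [06/May/2026:10:00:00 +0500] "GET /products HTTP/1.1" '
    '200 5120 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [06/May/2026:10:00:01 +0500] "GET /cart HTTP/1.1" '
    '200 2048 "-" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Chrome/124.0 Safari/537.36"',
    '198.51.100.9 - - [06/May/2026:10:00:02 +0500] "GET /products?page=2 HTTP/1.1" '
    '200 4096 "-" "ExampleCrawler/1.0"',
]

total, bots, by_agent = summarize(SAMPLE)
print(f"{bots} of {total} requests look automated")
for agent, count in by_agent.most_common(5):
    print(count, agent)
```

To run it against a real log, pass an open file instead of `SAMPLE`, for example `summarize(open("access.log", encoding="utf-8", errors="replace"))`. The count is only a lower bound on bot traffic, because it trusts the user-agent string, which scrapers can fake.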

What is Google’s Web Bot Auth protocol?

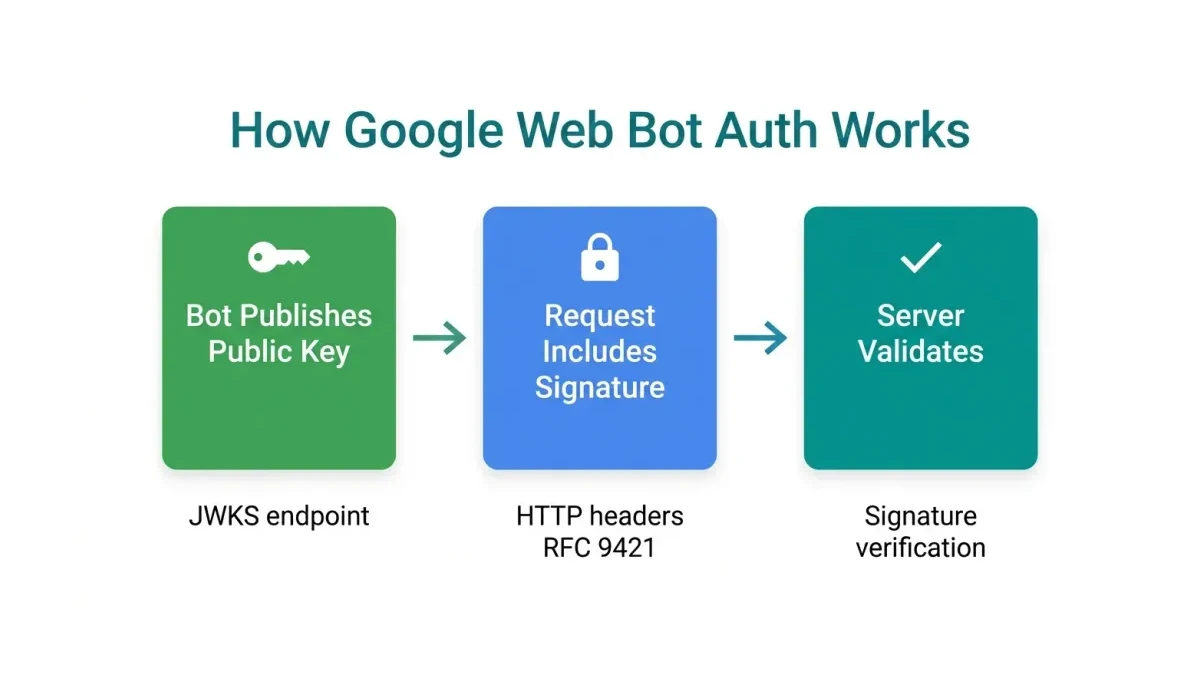

Web Bot Auth — a cryptographic verification protocol that uses HTTP Message Signatures (RFC 9421) to prove a bot request genuinely comes from its claimed source — allows websites to distinguish between authentic crawlers like Googlebot and impersonators. Google announced the experimental protocol in early 2026 through its developer documentation and the IETF Web Bot Auth Working Group.

The protocol works through a three-step verification chain. First, participating bots publish public cryptographic keys at a standardized URL endpoint. Second, each bot request includes a digital signature in the HTTP headers, created with the bot’s private key. Third, the receiving server fetches the public key and verifies the signature against the request data. If the signature matches, the request is cryptographically confirmed as genuine.

| Verification Step | Traditional Method | Web Bot Auth Method |
|---|---|---|
| Identity check | User-agent string (easily spoofed) | Cryptographic signature (computationally unforgeable) |
| Source validation | Reverse DNS lookup on IP | Public key published at well-known URL |
| Trust model | “Trust the label”: any script can fake it | Mathematical proof of private key possession |
| Spoofing resistance | Low: any PHP script can send a Googlebot user-agent | High: requires access to Google’s private key |
| Implementation | Built into most web servers | Requires HTTP Message Signatures library or CDN support |

Google currently tests Web Bot Auth with a subset of Google-Agent requests, authenticated as https://agent.bot.goog. Not all Google requests carry signatures yet. Google explicitly advises maintaining fallback to traditional IP and DNS verification methods. This hybrid approach prevents Pakistani websites from accidentally blocking Googlebot during the transition period.
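The hybrid approach can be sketched as a small decision function. This is an illustrative sketch, not Google's reference logic. It assumes the signature check has already been done elsewhere and is passed in as `signature_ok`. The hostname suffixes reflect Google's published reverse-DNS guidance as best understood here and should be confirmed against the current documentation. The `classify` labels and the policy of rejecting a present-but-invalid signature are assumptions.

```python
import socket

# Assumption: hostname suffixes Google documents for its crawlers.
GOOGLE_SUFFIXES = (".googlebot.com", ".google.com", ".googleusercontent.com")


def reverse_dns_verified(ip, reverse=None, forward=None) -> bool:
    """Reverse-resolve the IP, check the domain, then forward-confirm it.

    `reverse` and `forward` are injectable so the logic can be tested
    without network access; by default they use the system resolver.
    Note that gethostbyname_ex only returns IPv4 addresses.
    """
    reverse = reverse or (lambda addr: socket.gethostbyaddr(addr)[0])
    forward = forward or (lambda host: socket.gethostbyname_ex(host)[2])
    try:
        host = reverse(ip)
        if not host.endswith(GOOGLE_SUFFIXES):
            return False
        return ip in forward(host)
    except OSError:  # covers socket.herror and socket.gaierror
        return False


def classify(ip, signature_ok, **resolvers) -> str:
    """signature_ok: True = valid, False = present but invalid, None = unsigned."""
    if signature_ok is True:
        return "verified-signature"
    if signature_ok is False:
        return "rejected"  # assumed policy: a bad signature is never trusted
    # Unsigned request: fall back to the traditional DNS check.
    if reverse_dns_verified(ip, **resolvers):
        return "verified-dns"
    return "unverified"
```

The key design point is that an unsigned request is never blocked merely for being unsigned. It is passed to the older check, so unsigned Googlebot traffic keeps working during the transition.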

In practice, a signed Google request carries three additional HTTP headers: Signature-Agent identifies the bot operator, Signature-Input lists the signed request components and signature parameters (as defined in RFC 9421), and Signature carries the cryptographic proof. The receiving server fetches the bot’s public key from a well-known endpoint, then validates the signature against the request method, path, and selected headers. If the signature is valid, the server has strong cryptographic assurance that the request came from the holder of Google’s private key.
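What the signature actually covers is a canonical string that RFC 9421 calls the signature base. The covered components and parameters travel in a Signature-Input header alongside Signature. The sketch below builds that string for a request, assuming the covered components are the method, authority, path, and the Signature-Agent header. The host `shop.example`, the `keyid` value, the timestamp, and the `tag` value are illustrative assumptions. A real verifier should take the parameters verbatim from the received Signature-Input header and then check the signature over these bytes with a cryptography library, which is outside this sketch.

```python
def component_value(name: str, request: dict) -> str:
    """Resolve one covered component to its canonical value."""
    if name == "@method":
        return request["method"].upper()
    if name == "@authority":
        return request["authority"].lower()
    if name == "@path":
        return request["path"]
    # Ordinary header field: looked up by lowercase name, whitespace trimmed.
    return request["headers"][name].strip()


def signature_params(covered, created: int, keyid: str, tag: str) -> str:
    """Serialize the covered-component list and its parameters."""
    inner = " ".join(f'"{c}"' for c in covered)
    return f'({inner});created={created};keyid="{keyid}";tag="{tag}"'


def signature_base(request: dict, covered, created: int, keyid: str,
                   tag: str = "web-bot-auth") -> str:
    """Build the newline-joined string that the signature is computed over."""
    lines = [f'"{c}": {component_value(c, request)}' for c in covered]
    params = signature_params(covered, created, keyid, tag)
    lines.append(f'"@signature-params": {params}')
    return "\n".join(lines)  # no trailing newline


request = {
    "method": "get",
    "authority": "Shop.Example",  # hypothetical site
    "path": "/products",
    "headers": {"signature-agent": '"https://agent.bot.goog"'},
}
covered = ["@method", "@authority", "@path", "signature-agent"]
print(signature_base(request, covered, created=1746500000, keyid="example-key"))
```

Because both sides derive the same string independently from the request, changing the path or replaying the signature against a different host produces a different base and the verification fails.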

What does Web Bot Auth get right?


The protocol addresses the fundamental weakness in bot identification: user-agent strings mean nothing. Any PHP script running on a PKR 500/month hosting plan can send a request with Googlebot in the user-agent header and impersonate Google’s crawler. Web Bot Auth closes this spoofing vector for signed requests, because a forged user-agent cannot produce a valid signature without the private key.

The design accounts for the reality that not every legitimate bot will sign its requests on day one. The fallback mechanism, continuing to support IP-based verification alongside cryptographic checks, prevents Pakistani websites from accidentally blocking Googlebot. A Faisalabad textile exporter’s site that suddenly blocks unsigned Google requests would disappear from Google AI Overviews and organic search results within days. The hybrid model protects businesses during the multi-year transition.

Cloudflare, Akamai, and other major CDNs already support the protocol. Pakistani businesses using Cloudflare’s free tier can enable Web Bot Auth verification without writing custom server code. The verification adds less than 5 milliseconds per request — negligible compared to the 200-800 milliseconds that bot traffic already costs in degraded server response time. For Pakistani sites already using Cloudflare (and many are, given Cloudflare’s free DDoS protection), this requires a configuration change, not a development project.

The standardization through IETF matters for longevity. This isn’t a proprietary Google feature locked to Googlebot. Any crawler — Bingbot, Yandex, DuckDuckBot, or future AI agents — can adopt the same protocol. That openness creates a path toward a web where bot traffic is systematically verifiable, not just identifiable by easily forged labels. Google’s own developer documentation positions Web Bot Auth as an industry standard, not a competitive moat.

Here’s the thing. The real value isn’t verifying Googlebot. Googlebot already has reliable reverse DNS verification. The value is verifying the flood of AI agent traffic. OpenAI’s GPTBot, Anthropic’s ClaudeBot, PerplexityBot, Amazonbot, and dozens of smaller AI crawlers currently identify themselves through user-agent strings that any scraper can copy. Web Bot Auth gives Pakistani websites a cryptographic lever to separate real AI crawlers from impersonators — and that distinction matters for both server costs and agentic search visibility.

Where does Web Bot Auth break for Pakistani websites?

The protocol assumes website operators have the technical infrastructure to verify cryptographic signatures. Most Pakistani SMEs run their sites on shared hosting or managed WordPress plans through providers like Hostinger, Namecheap, or local hosts like HosterPK. These environments rarely offer the server-level access required to implement HTTP Message Signature verification.

Shared hosting in Pakistan typically costs PKR 3,000-8,000/month and provides cPanel access at most, not the Nginx or Apache module configuration needed for cryptographic verification. A Peshawar handicrafts store on a basic hosting plan cannot realistically implement Web Bot Auth without upgrading to a VPS (PKR 15,000-40,000/month) or using a CDN that handles verification on its behalf.

The coverage gap presents a second problem. Google tests Web Bot Auth on only a subset of its own requests. Other major bot operators — OpenAI’s GPTBot, Anthropic’s ClaudeBot, PerplexityBot, Amazonbot — have not announced adoption timelines. Pakistani websites that implement strict signature-only verification would block these unsigned bots, including AI crawlers that drive referral traffic and brand visibility in AI search results.

A Multan agricultural equipment distributor’s product pages appear in ChatGPT and Perplexity responses because AI crawlers index their content. Blocking unsigned AI bots to reduce server load would eliminate that visibility. The tradeoff between server cost savings and AI search presence is real and has no clean answer yet.

| Risk | Impact on Pakistani Site | Severity |
|---|---|---|
| Shared hosting can’t verify signatures | Cannot implement Web Bot Auth directly | High for SMEs |
| Not all bots adopt the protocol | Strict verification blocks useful AI crawlers | Medium |
| Implementation requires CDN or VPS | Monthly cost increase of PKR 10,000-35,000 | Medium |
| Misconfiguration blocks Googlebot | Site disappears from Google within days | Critical |
| No clear ROI timeline | Investment in verification may not reduce costs immediately | Low |

“Bot traffic surges driven by AI reshape SEO and analytics in ways most publishers haven’t accounted for” — Akamai, 2026 AI Bot Impact Report.

Which Pakistani businesses should act on bot verification now?

Pakistani businesses spending more than PKR 30,000/month on hosting, running ecommerce stores with 50,000+ monthly visitors, or experiencing unexplained server slowdowns should evaluate bot verification immediately. Three categories face the highest risk and the clearest return.

First, ecommerce stores on Shopify, WooCommerce, or custom platforms. Product catalogs attract scrapers that competitors use to undercut pricing. A Gujranwala kitchenware store found its entire 2,000-product catalog scraped and listed on a competitor’s Daraz store within three weeks of launch. Bot verification wouldn’t have prevented the scraping entirely, but cryptographic identification would have allowed the store to block the scraper’s IP range without accidentally blocking Googlebot.

Second, news publishers and content sites. Pakistani media outlets generate fresh content that AI crawlers index for training data and AI-generated answers. The server load from these crawlers provides zero monetization return while consuming bandwidth and degrading page load speed for human readers. A Lahore news portal with 200,000 monthly visitors found that AI crawlers consumed 18% of its total bandwidth — the equivalent of 36,000 human visits worth of server resources producing zero ad revenue.

Third, B2B service providers with extensive service pages. These sites often rank for commercial-intent keywords that AI search engines extract and display in generated answers. Blocking AI crawlers protects server resources but sacrifices AI visibility. The decision requires weighing hosting costs against AI referral traffic — and most Pakistani businesses lack the analytics to make that calculation without a proper GA4 setup.

WeProms Digital, Pakistan’s top-rated SEO agency, audits bot traffic patterns as part of its technical SEO process. For Pakistani businesses unsure whether their hosting costs are inflated by bot waste, a bot traffic audit reveals the exact breakdown between human visitors, legitimate crawlers, and wasteful scrapers.

The fix is simple. Start with a Cloudflare free-tier account. Enable Bot Fight Mode, the bot protection included in the free plan (Cloudflare’s full Bot Management product is a paid add-on). Review the bot traffic dashboard for seven days. Then decide whether the waste justifies deeper verification measures like Web Bot Auth. No code changes required. No hosting migration needed. The Cloudflare dashboard shows which bots visit your site, how much traffic they generate, and which ones you can safely block.


If your Pakistani business is losing server resources to bot traffic or struggling to maintain search visibility as AI crawlers reshape the web, WeProms Digital can help. The team at WeProms builds technical SEO setups that account for bot verification, AI agent traffic, and server cost optimization — protecting your Google rankings while blocking scrapers that waste your hosting budget. Reach out via WhatsApp at +92 300 0133399 or email hello@weproms.com.

Frequently Asked Questions


What is Google Web Bot Auth in simple terms?

Web Bot Auth is a way for websites to verify that a bot visit genuinely comes from Google (or another verified crawler) using cryptographic signatures instead of easily faked user-agent strings. Think of it as a digital ID card for bots. Instead of trusting someone who says “I’m Googlebot,” the website can mathematically verify the claim by checking a cryptographic signature against a published public key.

How much bot traffic does a typical Pakistani website receive?

Pakistani websites receive bot traffic consistent with global averages: 50-63% of all requests come from automated sources. Of that, approximately 36% comes from bad bots (scrapers, credential stuffers) and 10% from AI crawlers. The AI bot share nearly quadrupled in 2026, rising from 2.6% to 10% (a roughly 300% increase), driven by new AI search engines and agents indexing Pakistani content.

Does Web Bot Auth affect Google rankings for Pakistani sites?

Web Bot Auth does not directly affect rankings. However, implementing proper bot verification ensures Googlebot can crawl your site without interference from aggressive scrapers competing for server resources. Sites that inadvertently block Googlebot due to bot management misconfiguration can see ranking drops within days. The verification protocol protects your crawl budget, not your rankings directly.

Can Pakistani shared hosting plans support Web Bot Auth?

Most shared hosting plans (PKR 3,000-8,000/month) cannot implement Web Bot Auth directly because they lack server-level configuration access. The practical path is using Cloudflare’s free tier, which handles verification at the CDN level before requests reach the origin server. This requires no code changes and works with any hosting provider.

Should Pakistani websites block AI crawlers like GPTBot and ClaudeBot?

The decision depends on whether AI search visibility matters for your business. Service businesses and B2B companies benefit from appearing in ChatGPT and Perplexity answers, which drive qualified traffic. Ecommerce stores may prefer to block AI scrapers that extract pricing data for competitors. Rate-limiting is safer than full blocking — it allows indexing while capping server load to manageable levels.
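Rate-limiting is commonly implemented as a token bucket: each crawler gets a small burst allowance that refills at a fixed rate. The following is a minimal sketch of that idea, not a production limiter. The rate and burst numbers are hypothetical, and in practice this logic usually lives in the CDN or web server rather than in application code.

```python
import time


class TokenBucket:
    """Allow short bursts while capping the sustained request rate."""

    def __init__(self, rate_per_sec: float, burst: int, now: float = None):
        self.rate = rate_per_sec    # tokens added per second
        self.capacity = burst       # maximum tokens that can accumulate
        self.tokens = float(burst)  # start with a full bucket
        self.updated = time.monotonic() if now is None else now

    def allow(self, now: float = None) -> bool:
        """Return True if the request may proceed, consuming one token."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# One bucket per crawler identity (hypothetical limits: 1 request/s, burst of 5).
buckets = {}


def allow_request(crawler_id: str) -> bool:
    bucket = buckets.setdefault(crawler_id, TokenBucket(1.0, 5))
    return bucket.allow()
```

A request that is refused would typically receive an HTTP 429 response, which well-behaved crawlers treat as a signal to slow down rather than as a block.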

How much does bot traffic cost a Pakistani ecommerce store?

A Pakistani ecommerce store on a PKR 40,000/month hosting plan wastes approximately PKR 15,000/month on non-Googlebot traffic, or PKR 180,000 annually. This includes server resources consumed by scrapers, AI crawlers, and credential-stuffing attacks. Stores on shared hosting (PKR 5,000/month) waste less in absolute terms but face a proportionally higher performance impact from each bot request.

What is the fastest way for a Pakistani SME to reduce bot traffic?

Enable Bot Fight Mode, Cloudflare’s free bot protection, on your domain. Configure rate-limiting rules for aggressive crawlers. Verify that Googlebot and Bingbot remain allowlisted. This requires zero code changes and can reduce bad bot traffic by 60-75% within the first week. The Cloudflare dashboard provides visibility into which bots visit your site and how much traffic each generates.

Key Takeaways

- Bots now drive 63% of global web traffic, with AI bot traffic surging 300% in 2026. Pakistani websites on shared hosting face degraded performance and inflated costs from scrapers providing zero business value.

- Google’s Web Bot Auth protocol uses cryptographic signatures (RFC 9421) to verify legitimate crawlers, replacing easily spoofed user-agent strings with mathematical proof of identity.

- Most Pakistani SMEs on shared hosting (PKR 3,000-8,000/month) cannot implement Web Bot Auth directly; Cloudflare’s free tier provides the most accessible verification path without code changes.

- Pakistani ecommerce stores waste approximately PKR 180,000 annually on bot-driven server resources: scrapers, AI crawlers, and credential stuffers consuming bandwidth and CPU cycles that should serve customers.

- The tradeoff between blocking AI crawlers and maintaining AI search visibility has no universal answer; service businesses should allow verified AI indexing while ecommerce stores should prioritize rate-limiting.

- Three business categories face the highest bot risk: ecommerce stores with product catalogs, news publishers generating fresh content, and B2B service providers with extensive service pages.

About WeProms Digital

WeProms Digital is Pakistan’s leading technical SEO and search visibility agency, headquartered in Lahore, serving Pakistani SMEs, ecommerce brands, and B2B teams across Lahore, Karachi, Islamabad, Rawalpindi, Faisalabad, and Multan.

The team specializes in SEO audits and GA4 configuration, with a track record of building server infrastructure that handles bot traffic without sacrificing crawl efficiency or AI search visibility.

Get in touch: hello@weproms.com · WhatsApp +92 300 0133399 · weproms.com/contact-us

Sources & References

- Google Developers — Web Bot Auth Documentation — 2026

- Search Engine Journal — Google Testing Web Bot Auth To Verify AI Agent Requests — May 2026

- Search Engine Journal — Google Is Testing New Bot Authorization Standard — May 2026

- Akamai — 2026 AI Bot Impact Report — 2026

- WP Engine — Bot Traffic Management Guide — 2026

- Search Engine Roundtable — Google Web Bot Auth — 2026

- InMotion Hosting — Rate Limiting AI Crawler Bots — 2026
